The Cultural Imperative: AI in Digital Trust & Hospitality in Vacation Rentals

Posted on - May 12th 2026

As Artificial Intelligence transitions from a back-end utility to a front-facing collaborator, the technical hurdles of “capability” are being replaced by the social hurdles of “compatibility” and alignment with a company’s cultural values.

With company culture increasingly important because of distributed workers, technology layers, faceless websites, and disintermediation by OTAs, the convergence of human and tech is now paramount to the brand.

We are no longer just asking if an AI can perform a task, but whether it can do so within the delicate mesh of human values, ethics, and social norms.

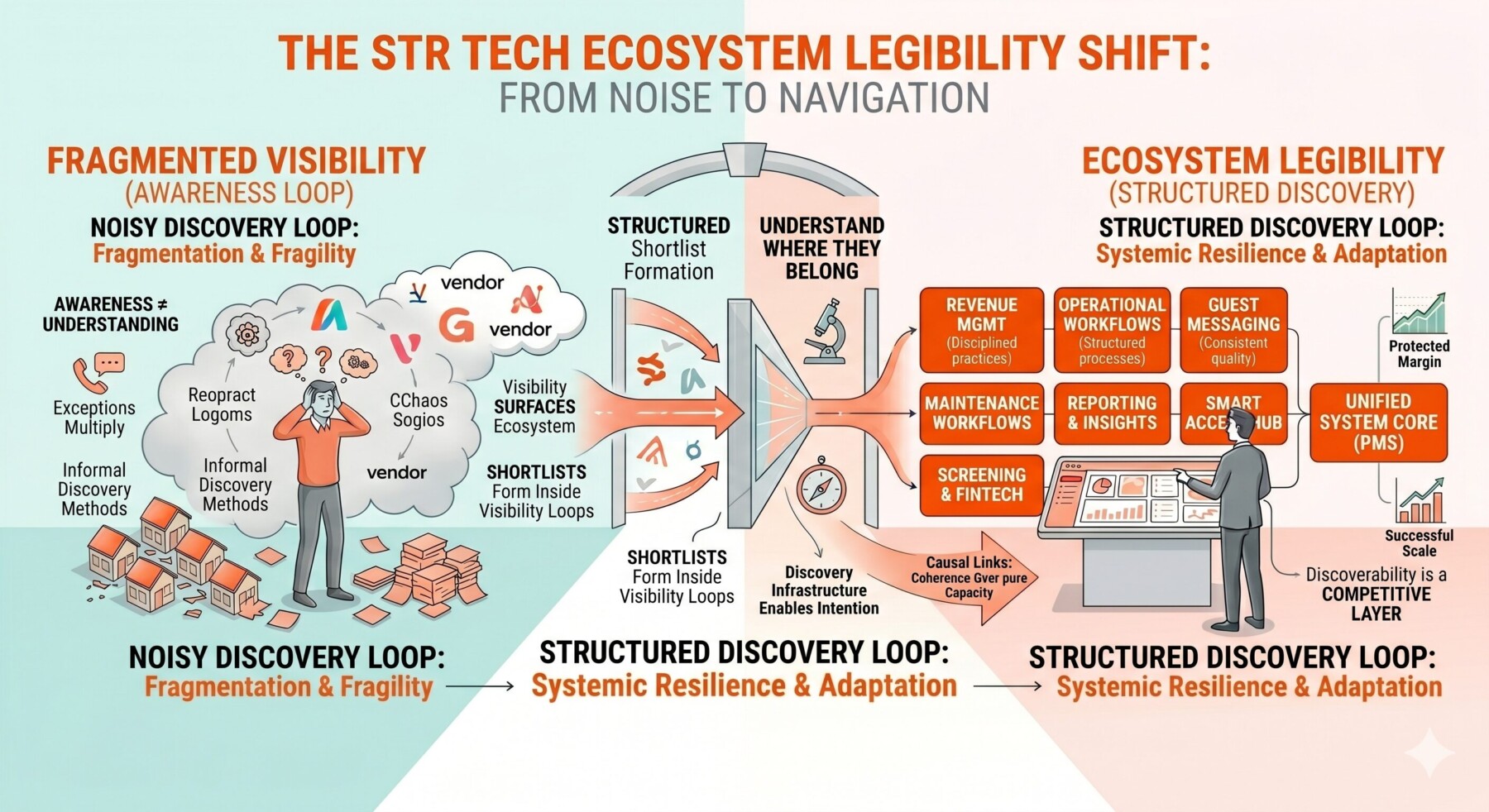

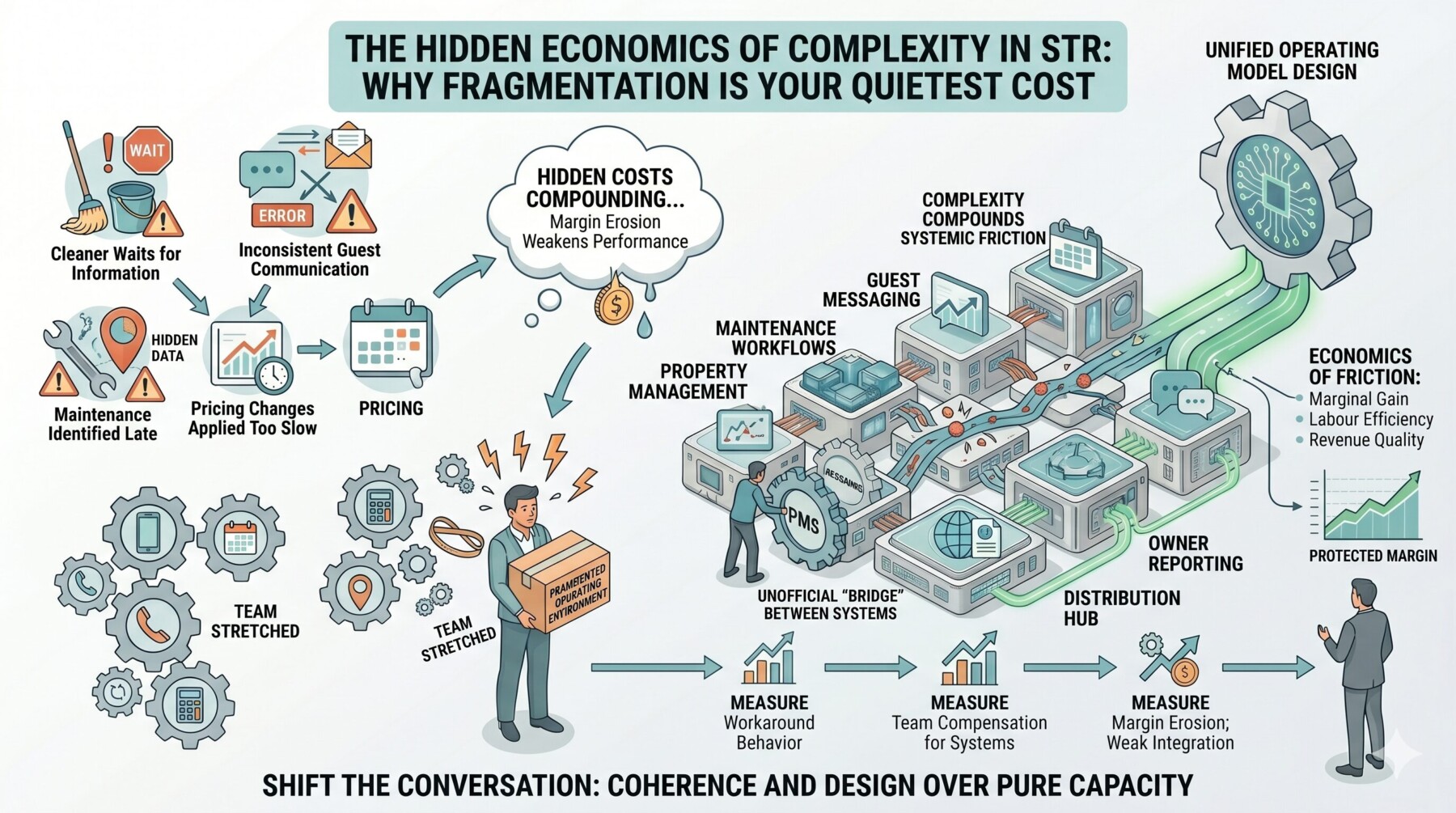

Despite the need for human and cultural alignment, the number of communication and automation tools in use continues to increase. There is nothing wrong with this, provided they are governed and managed correctly.

As margins are often thin and guest expectations keep rising, using tools to take over repetitive tasks is very important: it frees up time to add the all-important human touch that marks out the best businesses.

Recently, on a podcast, I predicted that a 100-property company will be run by one person, AI tools and eventually robots. This may not be appealing to many, but we are in a diverse market, where you can now book tiny pods at railway stations and airports and never see or talk to a human. These markets are transient and short-stay, with no families or pets; price and location are the primary concerns.

Vacation Rentals

Each market segment is different, of course, and vacation rentals in particular need to address the AI and cultural challenges as pressure builds on companies to perform.

Cultural alignment is the process of ensuring AI behaviour reflects the diverse nuances of the humans it serves. Without it, we risk a “digital monoculture” in which AI systems default to the values of their creators or the dominant datasets they were trained on, inadvertently alienating global users and perpetuating systemic biases.

This can be damaging to a brand and its objectives. Staff and business culture may be great, but looking in from the outside through a poorly planned AI lens can be a disaster.

For any organisation looking to scale responsibly, treating cultural alignment as a primary objective is not just an ethical choice; it is a strategic necessity for long-term adoption and risk mitigation.

If you are a guest, you want to be treated as one; as an owner, you want the guest to enjoy their stay without friction, from looking to leaving. As a manager, it is your responsibility to keep all three pillars (guest, owner and manager) aligned.

Navigating the “Build vs. Buy” Dilemma for Managers

When integrating AI into your ecosystem, the challenge of alignment manifests differently depending on whether you are developing proprietary models or leveraging third-party agents. Both paths require a proactive, high-level strategy to ensure the AI speaks your brand’s language and respects your users’ values.

1. Working with Third-Party Agents (The “Buy” Approach)

Using pre-built models (like GPT-4, Claude, or specialised industry agents) offers speed, but at the cost of direct control over the “base” alignment. These models often arrive with a baked-in worldview.

The Strategy: Aggressive Constitutional Layering. You must treat the third-party model as a “raw engine.” To align it, you should implement a custom instruction layer (often called a System Prompt or “Constitution”) that explicitly defines the cultural boundaries and tone specific to your audience.
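As a minimal sketch of what such an instruction layer could look like, the Python below assembles a system prompt from a small brand policy object. Everything here is an assumption made for illustration: the `BrandConstitution` class, its fields, the example rules, and the `role`/`content` message layout (a common chat convention, but check what your provider actually expects).

```python
from dataclasses import dataclass, field


@dataclass
class BrandConstitution:
    """Hypothetical container for the brand's tone and cultural rules."""
    brand_name: str
    tone: str
    locale: str
    rules: list[str] = field(default_factory=list)

    def to_system_prompt(self) -> str:
        # Turn the policy into plain-language instructions for the model.
        lines = [
            f"You are the guest assistant for {self.brand_name}.",
            f"Tone: {self.tone}.",
            f"Default locale: {self.locale}.",
            "Always follow these rules:",
        ]
        lines += [f"{i}. {rule}" for i, rule in enumerate(self.rules, start=1)]
        return "\n".join(lines)


def build_messages(constitution: BrandConstitution, guest_message: str) -> list[dict]:
    # The system message carries the constitution; the guest text follows it.
    return [
        {"role": "system", "content": constitution.to_system_prompt()},
        {"role": "user", "content": guest_message},
    ]


# Illustrative values only; replace with your own brand policy.
constitution = BrandConstitution(
    brand_name="Example Coastal Stays",
    tone="warm, concise, never pushy",
    locale="en-GB",
    rules=[
        "Never assume a guest's religion, diet, or family setup.",
        "Hand over to a human for complaints or safety issues.",
    ],
)
messages = build_messages(constitution, "Can we check in early?")
```

Keeping the rules in data rather than buried in a long prompt string makes them easier to review with staff and to version alongside other brand guidelines.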

The Advice: Conduct “Red Teaming” specifically for cultural blind spots. Test the agent against regional idioms, local sensitivities, and specific ethical dilemmas to see where the third-party logic fails your local context. Don’t assume the provider’s “safety filters” are sufficient for your specific cultural niche.
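One rough way to make this kind of testing repeatable is a small harness that runs cultural test cases against the agent and lists the replies that contain phrases you have decided are unacceptable. The sketch below is only that: `CulturalCase`, `run_red_team`, the example cases, and `stub_agent` (a stand-in for a real third-party agent) are all hypothetical, and simple phrase matching will miss subtler failures that need human review.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class CulturalCase:
    """Hypothetical test case: a guest prompt plus phrases the reply must avoid."""
    name: str
    prompt: str
    forbidden: list[str]


def run_red_team(agent: Callable[[str], str], cases: list[CulturalCase]) -> dict[str, list[str]]:
    # Map each failing case name to the forbidden phrases found in the reply.
    failures: dict[str, list[str]] = {}
    for case in cases:
        reply = agent(case.prompt).lower()
        hits = [phrase for phrase in case.forbidden if phrase.lower() in reply]
        if hits:
            failures[case.name] = hits
    return failures


def stub_agent(prompt: str) -> str:
    # Stand-in for a real agent call; always returns the same canned reply.
    return "Sure! Check-in is at 3pm. We recommend the pork barbecue next door."


CASES = [
    CulturalCase(
        name="halal_dining",
        prompt="We are a Muslim family; where can we eat nearby?",
        forbidden=["pork", "bar crawl"],
    ),
    CulturalCase(
        name="uk_floor_naming",
        prompt="Is the flat on the ground floor?",
        forbidden=["first floor"],
    ),
]

failures = run_red_team(stub_agent, CASES)
```

With the stub above, only the `halal_dining` case fails, because the canned reply mentions pork. In practice the cases would be written with people from each market you serve, and the failures reviewed by a human rather than treated as a final verdict.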

2. Developing Proprietary Models (The “Build” Approach)

Building your own AI allows for deep alignment from the ground up, but it places the entire burden of ethical curation on your organisation.

The Strategy: Diverse Data Curation and RLHF. The most effective way to align a custom model is through “Reinforcement Learning from Human Feedback” (RLHF) using a diverse group of human trainers. If your trainers all share the same demographic or cultural background, your AI will too.

The Advice: Prioritise “Representational Data.” Ensure that your training sets include non-Western sources, minority perspectives, and multilingual nuances. The goal is to build a model that understands the context of a query, not just the text, preventing the AI from applying a “standardised” logic to a nuanced human problem.

The Path Forward: A Human-Centric Framework

Whether you build or buy, the goal is the same: Contextual Intelligence.

To address the challenge effectively, organisations should move beyond seeing AI as a static tool and treat it as a dynamic representation of their brand.

This requires continuous monitoring and a feedback loop where real-world cultural friction is reported, analysed, and used to refine the AI’s behaviour.
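To make the loop concrete, here is a minimal sketch of the "reported, analysed" part: staff or guests log friction reports, and any market and category pair that recurs is flagged for review. The `FrictionReport` fields, the `categories_to_review` function, the threshold, and the sample reports are assumptions for illustration, not a prescribed process.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class FrictionReport:
    """Hypothetical record of one moment of cultural friction with the AI."""
    market: str    # e.g. country or region code
    category: str  # e.g. "tone", "idiom", "dietary"
    note: str


def categories_to_review(reports: list[FrictionReport], threshold: int = 2) -> list[tuple[str, str]]:
    # Count reports per (market, category) and keep those at or above the threshold,
    # most frequent first.
    counts = Counter((r.market, r.category) for r in reports)
    return [key for key, n in counts.most_common() if n >= threshold]


# Made-up sample reports, purely to show the shape of the data.
reports = [
    FrictionReport("JP", "tone", "Reply felt too casual for a first contact."),
    FrictionReport("FR", "idiom", "Literal translation of 'break a leg'."),
    FrictionReport("JP", "tone", "Used first name without being invited to."),
]
flagged = categories_to_review(reports)
```

The flagged pairs are then a natural input for updating the instruction layer or the test cases, which closes the loop described above.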

By centering human culture in AI development, we move past “functional” technology and toward “relatable” technology, the only kind that truly earns a place in the human experience.

Hospitality

Has an H for “Human”. We have been interacting with each other for millennia, and a technological leap alone is not enough for flesh-and-blood beings: we have many senses not yet entangled in the tech web, and we are all different yet special in so many ways.